Automated vs. Manual Testing for Mobile Apps: Finding the Right Balance

Today’s chosen theme: Automated vs. Manual Testing for Mobile Apps. Discover a practical, inspiring path to faster releases, fewer regressions, and better user experiences by blending human insight with dependable automation. Share your experiences in the comments and subscribe for fresh, battle-tested mobile quality strategies.

What Each Approach Really Means

Manual testing, clearly defined

Manual testing is a human-led exploration of the app, where empathy, curiosity, and context drive discovery. It shines at usability, accessibility, and nuanced behaviors that scripts miss. Skilled testers use charters, sessions, and notes to follow signals, question assumptions, and reveal surprising edge cases unique to real users.

Automation, clearly defined

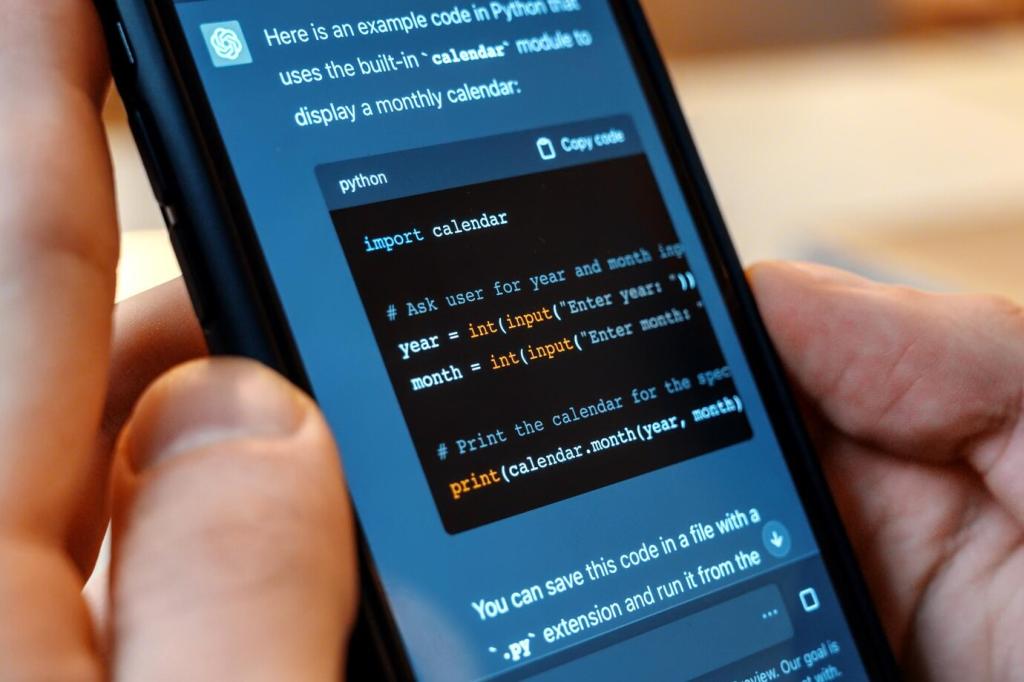

Automation executes repeatable checks quickly and consistently, making it ideal for regression, smoke, and performance baselines. It connects to CI, runs on every commit, and scales across devices. When designed with stable locators, clear reporting, and smart retries, it safeguards core flows without slowing the team.
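One way to read "smart retries" is to retry only failures that are known to be transient, and never a failed assertion. The sketch below assumes a hypothetical `TransientError` marker and a hypothetical `run_with_retries` helper; neither comes from a specific test framework.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. device not ready)."""


def run_with_retries(check, max_attempts=3, delay_seconds=0.0):
    """Run check(); retry only on TransientError and return the attempt count.

    AssertionError and any other exception propagate immediately, so a real
    regression is never hidden behind a retry.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            check()
            return attempt
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the transient failure
            time.sleep(delay_seconds)
```

Returning the attempt count lets the report show which checks needed a retry, which is a useful early signal of flakiness.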

The pragmatic blend

A balanced strategy reserves automation for predictable, high-value paths, while manual testers pursue discovery, usability, and risk-based deep dives. Think in layers: unit and API checks for speed, targeted UI automation for coverage, and exploratory sessions for insight. Comment if you’ve found a split that truly fits your product.

Deciding What to Test Manually vs Automatically

Automate login, onboarding, checkout, and critical settings where regressions are costly. Keep locators resilient with accessibility identifiers and avoid over-asserting UI details. Favor deterministic data, mock external dependencies, and design tests to fail loudly and meaningfully, so developers can fix issues without guessing.
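As a rough illustration of deterministic data, a mocked external dependency, and a failure message that explains itself, here is a minimal sketch using Python's standard `unittest.mock`. The `checkout` function, the gateway's `charge` method, and the response fields are all hypothetical stand-ins, not a real payment API.

```python
from unittest.mock import Mock


def checkout(cart, gateway):
    """Hypothetical app logic: charge the cart total and return a receipt."""
    total_cents = sum(item["price_cents"] * item["qty"] for item in cart)
    response = gateway.charge(amount_cents=total_cents)
    if response["status"] != "approved":
        # Fail loudly: include the amount and the raw response in the error.
        raise RuntimeError(f"payment declined for {total_cents} cents: {response}")
    return {"total_cents": total_cents, "charge_id": response["id"]}


def test_checkout_charges_cart_total():
    # Deterministic data: fixed prices and quantities, no clock or randomness.
    cart = [{"price_cents": 1999, "qty": 2}, {"price_cents": 500, "qty": 1}]
    # Mocked dependency: no network call, same answer on every run.
    gateway = Mock()
    gateway.charge.return_value = {"status": "approved", "id": "ch_test_1"}

    receipt = checkout(cart, gateway)

    gateway.charge.assert_called_once_with(amount_cents=4498)
    expected = {"total_cents": 4498, "charge_id": "ch_test_1"}
    assert receipt == expected, f"unexpected receipt: {receipt!r}"
```

The test asserts on the charged amount and the receipt, not on layout or styling, which keeps it stable when the UI changes.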

Speed, Cost, and ROI—with Real Numbers

Every added UI test increases pipeline time and cloud usage. Keep a tight smoke suite on pull requests, run broader suites nightly, and isolate long-running scenarios. Measure median runtime, queue delays, and retry rates to ensure automation helps your cadence instead of silently slowing releases.
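The three measurements named above can be computed from whatever run records your CI exposes. This sketch assumes each run is a dictionary with hypothetical `runtime_s`, `queue_s`, and `attempts` fields; the sample values are made up for illustration.

```python
from statistics import median


def pipeline_health(runs):
    """Summarize median runtime, median queue delay, and retry rate."""
    retried = sum(1 for run in runs if run["attempts"] > 1)
    return {
        "median_runtime_s": median(run["runtime_s"] for run in runs),
        "median_queue_s": median(run["queue_s"] for run in runs),
        "retry_rate": retried / len(runs),
    }


# Illustrative records only; real values would come from your CI system.
sample_runs = [
    {"runtime_s": 300, "queue_s": 10, "attempts": 1},
    {"runtime_s": 420, "queue_s": 45, "attempts": 2},
    {"runtime_s": 360, "queue_s": 20, "attempts": 1},
    {"runtime_s": 900, "queue_s": 30, "attempts": 1},
]
print(pipeline_health(sample_runs))
# {'median_runtime_s': 390.0, 'median_queue_s': 25.0, 'retry_rate': 0.25}
```

The median is used instead of the mean so that a single slow run, like the 900-second one here, does not distort the trend.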

Flaky tests erode trust, cause reruns, and mask real defects. Track flake frequency, quarantine responsibly, and fix root causes promptly. Ignoring flakiness costs more than removing a test that provides little signal. Share your worst flake story to help others avoid similar traps.
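Tracking flake frequency needs a working definition of "flaky". The sketch below assumes one common definition: a test is flaky on a commit if it both passed and failed on that same commit. The tuple layout and the 20 percent quarantine threshold are assumptions for illustration.

```python
from collections import defaultdict


def flake_rates(results):
    """Return {test_name: share of commits with mixed pass/fail outcomes}.

    results is an iterable of (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(lambda: defaultdict(set))
    for name, sha, passed in results:
        outcomes[name][sha].add(passed)
    rates = {}
    for name, by_commit in outcomes.items():
        flaky = sum(1 for seen in by_commit.values() if seen == {True, False})
        rates[name] = flaky / len(by_commit)
    return rates


def quarantine_candidates(rates, threshold=0.2):
    """List tests whose flake rate meets or exceeds the threshold."""
    return sorted(name for name, rate in rates.items() if rate >= threshold)
```

A test that fails consistently on a commit is not counted as flaky here; it is reporting a real defect and should stay out of quarantine.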

Real-World Stories: Where Humans or Robots Win

A tester riding the subway noticed a crash when a notification arrived mid-payment at three percent battery in dark mode. No script covered that combination, but a curious human did. Had it shipped, the bug would have hit during a busy shopping weekend. Manual attention spared the team a painful, public incident.

An automated suite running overnight on devices set to different regions flagged a login token expiry mismatch. The issue reproduced only across daylight saving boundaries. Because the test ran unattended in CI, the team fixed it before users across continents woke up to broken sessions.

A startup trimmed a bloated UI suite to a sharp smoke set, moved logic to API tests, and doubled down on exploratory charters for new features. Release time dropped by two hours, and user-reported regressions fell. The lesson: align tests to risk and purpose, not habit.

Pull request gates and smoke checks

Gate merges with a fast, reliable smoke suite on representative devices. Fail quickly with actionable logs and screenshots. Keep the suite under a strict runtime budget so developers do not bypass it. Add wider nightly runs to catch slower issues without slowing daily momentum.
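A runtime budget is easier to keep when the pipeline checks it. This is a minimal sketch, assuming the smoke suite runs serially on one device and that per-test durations in seconds are available; the function name and message format are hypothetical.

```python
def check_runtime_budget(durations_s, budget_s, top_n=3):
    """Return (ok, message) for a suite assumed to run serially."""
    total = sum(durations_s.values())
    if total <= budget_s:
        return True, f"smoke suite OK: {total:.0f}s of {budget_s:.0f}s budget"
    # Name the slowest tests so the failure is actionable, not just red.
    slowest = sorted(durations_s.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    detail = ", ".join(f"{name} ({seconds:.0f}s)" for name, seconds in slowest)
    over = total - budget_s
    return False, f"smoke suite over budget by {over:.0f}s; slowest: {detail}"
```

For example, `check_runtime_budget({"login": 40, "checkout": 95, "onboarding": 60}, 180)` reports the suite as 15 seconds over budget and lists `checkout` first.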

Parallelization, caching, and sharding

Split tests across devices and executors, cache builds and dependencies, and reuse app artifacts. Shard by runtime history to balance load. This engineering discipline can cut UI runtime dramatically, turning automation from a bottleneck into a background guardian that teams trust every day.
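Sharding by runtime history can be approximated with a greedy longest-first assignment: sort tests by their recorded duration and always hand the next one to the least-loaded shard. This is a sketch of that heuristic, not the algorithm of any particular CI product, and the test names and durations are invented.

```python
import heapq


def shard_by_runtime(durations_s, shard_count):
    """Assign tests to shards, longest first, always to the lightest shard."""
    heap = [(0.0, index) for index in range(shard_count)]  # (load, shard index)
    heapq.heapify(heap)
    shards = [[] for _ in range(shard_count)]
    # Sort by duration descending, then by name so the output is stable.
    ordered = sorted(durations_s.items(), key=lambda kv: (-kv[1], kv[0]))
    for name, seconds in ordered:
        load, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return shards


# Illustrative durations in seconds from a hypothetical previous run.
history = {"checkout": 120, "login": 60, "onboarding": 50, "settings": 30, "search": 20}
print(shard_by_runtime(history, 2))
# [['checkout', 'search'], ['login', 'onboarding', 'settings']]
```

Both shards end up with 140 seconds of work in this example. The heuristic does not guarantee a perfect split in general, but it tends to be close when there are many more tests than shards.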

Results, triage, and accountability

Publish dashboards with trends, flake rates, and failure ownership. Start a daily ten-minute triage to classify failures, file issues, and assign follow-ups. Celebrate zero-flake days, and invite readers to share how they keep accountability humane, visible, and genuinely helpful to the whole team.